CAST: Effective and Efficient User Interaction for Context-Aware Selection in 3D Particle Clouds

Description:

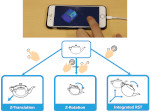

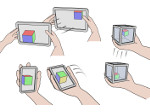

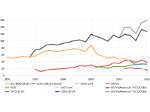

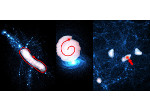

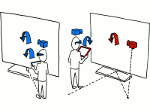

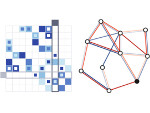

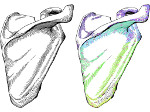

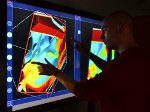

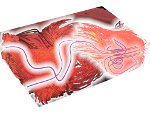

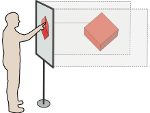

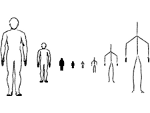

We present a family of three interactive Context-Aware Selection Techniques (CAST) for the analysis of large 3D particle datasets. For these datasets, spatial selection is an essential prerequisite to many other analysis tasks. Traditionally, such interactive target selection has been particularly challenging when the data subsets of interest were implicitly defined in the form of complicated structures of thousands of particles. Our new techniques SpaceCast, TraceCast, and PointCast improve the usability and speed of spatial selection in point clouds through novel context-aware algorithms. They can infer a user's subtle selection intention from gestural input, can deal with complex situations such as partially occluded point clusters or multiple cluster layers, and can all be fine-tuned after the selection interaction has been completed. Together, they provide an effective and efficient tool set for the fast exploratory analysis of large datasets. In addition to presenting CAST, we report on a formal user study that compares our new techniques not only to each other but also to existing state-of-the-art selection methods. Our results show that CAST family members are virtually always faster than existing methods without tradeoffs in accuracy. In addition, qualitative feedback shows that PointCast and TraceCast were strongly favored by our participants for intuitiveness and efficiency.
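To illustrate why context matters, here is a minimal sketch of a plain, context-free lasso selection. It is not the CAST algorithm, and none of its names come from the CAST software. It assumes an orthographic view along the z axis, so the projection of a particle is simply its (x, y) coordinates, and it uses the standard even-odd ray-casting point-in-polygon test. The helper names `point_in_polygon` and `lasso_select` are hypothetical.

```python
from typing import List, Sequence, Tuple

Point2 = Tuple[float, float]
Point3 = Tuple[float, float, float]


def point_in_polygon(p: Point2, poly: Sequence[Point2]) -> bool:
    """Even-odd (ray casting) test: count edge crossings of a ray from p towards +x."""
    x, y = p
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can cross the ray;
        # for such edges y1 != y2, so the division below is safe.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def lasso_select(points: Sequence[Point3], lasso: Sequence[Point2]) -> List[int]:
    """Context-free baseline (hypothetical): select every particle whose orthographic
    projection (x, y) lies inside the lasso, ignoring depth z entirely."""
    return [i for i, (x, y, _z) in enumerate(points) if point_in_polygon((x, y), lasso)]


square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
particles = [(0.5, 0.5, 0.0), (0.5, 0.5, 10.0), (2.0, 2.0, 0.0)]
print(lasso_select(particles, square))  # prints [0, 1]
```

Both particles behind the lasso are selected even though they lie at very different depths. This is the kind of ambiguity, with occluded clusters or several cluster layers along the view direction, that the context-aware techniques described above are meant to resolve.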

Paper download:  (7.5 MB)

Demo:

You can download a demo of the CAST selection techniques (for Win32, including example datasets, 42 MB) to try them out for yourself. To be fully functional, however, the demo requires a TUIO-based touch surface.

Videos:

30-second preview:

Paper presentation at SciVis 2015:

Get the videos:

- download the video (720p25, MPEG4, 78.8MB),

- watch the video on YouTube,

- watch the 30s preview on Vimeo,

- watch the paper presentation video on Vimeo.

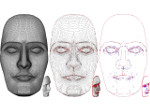

Pictures:

Cross-reference:

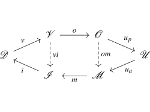

This paper extends our previous Cloud-Lasso selection technique; see the page on that paper as well.

Additional material:

Reference:

This work was done in collaboration with Hangzhou Dianzi University, Zhejiang, China, and the University of Groningen, the Netherlands. Also see Lingyun Yu's page on this project.