Efficient Structure-Aware Selection Techniques for 3D Point Cloud Visualizations with 2DOF Input

Description:

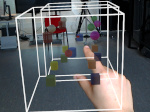

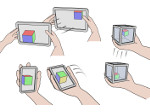

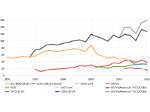

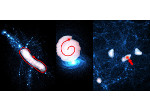

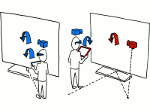

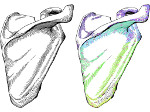

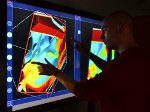

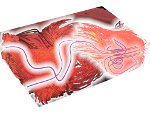

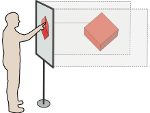

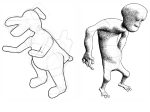

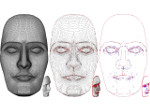

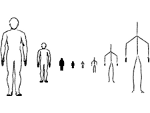

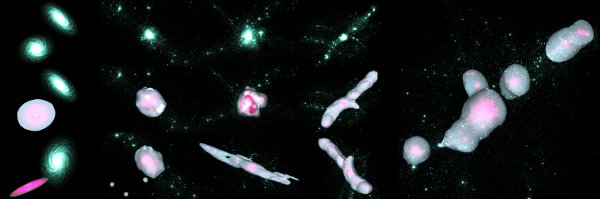

Data selection is a fundamental task in visualization because it serves as a pre-requisite to many follow-up interactions. Efficient spatial selection in 3D point cloud datasets consisting of thousands or millions of particles can be particularly challenging. We present two new techniques, TeddySelection and CloudLasso, that support the selection of subsets in large particle 3D datasets in an interactive and visually intuitive manner. Specifically, we describe how to spatially select a subset of a 3D particle cloud by simply encircling the target particles on screen using either the mouse or direct-touch input. Based on the drawn lasso, our techniques automatically determine a bounding selection surface around the encircled particles based on their density. This kind of selection technique can be applied to particle datasets in several application domains. TeddySelection and CloudLasso reduce, and in some cases even eliminate, the need for complex multi-step selection processes involving Boolean operations. This was confirmed in a formal, controlled user study in which we compared the more flexible CloudLasso technique to the standard cylinder-based selection technique. This study showed that the former is consistently more efficient than the latter—in several cases the CloudLasso selection time was half that of the corresponding cylinder-based selection.
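The idea of combining a screen-space lasso with particle density can be illustrated with a small sketch. This is not the TeddySelection or CloudLasso implementation from the paper: it assumes an orthographic view along the z axis, estimates density by simply counting particles in cubic grid cells, and uses hypothetical names and parameters (`lasso_density_select`, `cell_size`, `min_count`) chosen only for illustration. The first step alone corresponds to a plain lasso-extrusion (cylinder-style) selection; the density filter then discards sparse particles in front of or behind the dense cluster.

```python
from collections import Counter


def point_in_polygon(x, y, polygon):
    """Even-odd ray-casting test of a 2D point against a closed polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            # x coordinate at which the edge crosses that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def lasso_density_select(points, lasso, cell_size=1.0, min_count=3):
    """Return indices of points that project inside the lasso and lie in a
    grid cell containing at least `min_count` lasso-enclosed points.

    Assumptions (illustrative only): orthographic projection along z, and a
    per-cell particle count as a crude stand-in for a density estimate.
    """
    # Step 1: lasso extrusion, i.e. everything that projects inside the lasso.
    candidates = [
        i for i, (x, y, _z) in enumerate(points)
        if point_in_polygon(x, y, lasso)
    ]

    def cell(p):
        # Integer index of the cubic grid cell containing point p.
        return tuple(int(c // cell_size) for c in p)

    # Step 2: crude density estimate, counting candidates per grid cell.
    counts = Counter(cell(points[i]) for i in candidates)

    # Step 3: keep only candidates that sit in sufficiently dense cells.
    return [i for i in candidates if counts[cell(points[i])] >= min_count]


points = [
    (0.1, 0.1, 0.1), (0.2, 0.2, 0.2), (0.3, 0.3, 0.3),  # dense cluster
    (0.5, 0.5, 5.5),     # inside the lasso on screen, but isolated in depth
    (10.1, 10.1, 0.1),   # outside the lasso
]
lasso = [(-1.0, -1.0), (2.0, -1.0), (2.0, 2.0), (-1.0, 2.0)]
selected = lasso_density_select(points, lasso)
```

In this toy example the isolated particle at depth 5.5 projects inside the lasso but is dropped by the density filter, which a purely cylinder-based selection would not do without additional refinement steps.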

Paper download:  (4.9 MB)

Erratum:

In the paper on page 7 (page 2251 in the journal), the formula for the F1 score needs to be corrected to F1=2·P·R/(P+R); i.e., we had missed the factor of 2. The values in Table 2, however, were computed correctly; only the formula was reported incorrectly.
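As a quick check of the corrected formula, the following minimal sketch computes the F1 score, assuming that P and R denote precision and recall as is conventional for this measure. The function name `f1_score` and the choice to return 0 when both inputs are 0 are assumptions made here, not details taken from the paper.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall: F1 = 2*P*R / (P + R)."""
    if precision + recall == 0:
        # Convention chosen for this sketch: avoid division by zero.
        return 0.0
    return 2 * precision * recall / (precision + recall)


balanced = f1_score(0.5, 0.5)   # equal precision and recall
uneven = f1_score(0.8, 0.6)     # harmonic mean lies between the two inputs
```

With the factor of 2 in place, equal precision and recall yield an F1 score equal to that common value; without it, the result would be only half as large.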

Demo:

You can download a demo of the CloudLasso selection (for Win32, including example datasets, 21MB) to try it out for yourself. To be fully functional, however, the demo requires a TUIO-based touch surface.

Video:

Get the video:

- download the video (AVI-MPEG4, 62.6MB)

- download a smaller version of the video (AVI-MPEG4, 21.4MB)

- watch the video on YouTube

Pictures:

Cross-reference:

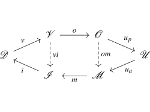

This paper was later extended to the CAST family of selection techniques; see the page on that paper as well. Our CloudLasso technique has also since been incorporated into the open-source software SlicerAstro by Punzo et al. [2017].

Additional material:

- slides from the SciVis 2012 presentation (PDF with embedded videos, 61.6MB)

- Lingyun Yu's page on this project

Main Reference:

Other Reference:

Lingyun Yu (2013) Touching 3D Data: Interactive Visualization of Cosmological Simulations. PhD thesis, University of Groningen, The Netherlands, June 2013.

This work was done at the Scientific Visualization and Computer Graphics Lab of the University of Groningen, the Netherlands. Also see Lingyun Yu's page on this project.