Point Specification in Collaborative Visualization for 3D Scalar Fields Using Augmented Reality

Description:

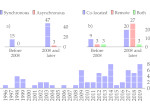

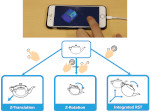

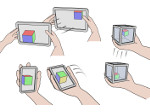

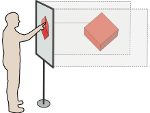

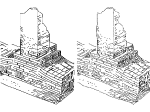

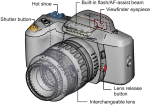

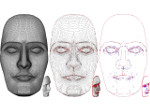

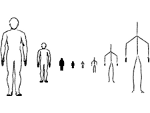

We compared three techniques for specifying 3D positions in collaborative augmented reality (AR) visualization. AR head-mounted displays allow multiple users to share the same physical space while preserving seamless social interaction. Because interaction is a key part of exploratory visualization tasks, we adapted three distinct techniques from the Virtual Reality literature for specifying points in 3D space, for example to place annotations where users cannot rely on existing data objects. We evaluated these techniques in terms of accuracy and speed, users' subjective workload and preferences, and users' co-presence, mutual understanding, and behavior during collaborative tasks. Our results suggest that all three techniques provide good mutual understanding and co-presence among collaborators. They differ, however, in how users behave, in their accuracy, and in their speed.

Paper download:  (9.9 MB)

Software:

The software is available at https://github.com/MickaelSERENO/SciVis_Server/tree/CHI2020.

Study materials and data:

Our study materials and data can be found in the following OSF repositories: osf.io/43j9g and osf.io/7a6yr.

Video:


Pictures:

Main Reference:

Other Reference:

Mickaël Sereno (2021). Collaborative Data Exploration and Discussion with Augmented Reality Support. PhD thesis, Université Paris-Saclay, France, December 2021.

This work was done at the AVIZ project group of Inria, France.